6.9: Constant Volume Calorimetry

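Calorimeters are used to determine the heat released or absorbed by a chemical reaction. A coffee cup calorimeter is designed to operate at constant (atmospheric) pressure, which makes it convenient for measuring the heat flow (or enthalpy change) that accompanies processes occurring in solution at constant pressure. A different type of calorimeter that operates at constant volume, colloquially known as a bomb calorimeter, is used to measure the energy produced by reactions that yield large amounts of heat and gaseous products, such as combustion reactions. (The term "bomb" comes from the observation that these reactions can be vigorous enough to resemble explosions that would damage other calorimeters.) The first law of thermodynamics states that the change in internal energy of a reaction (ΔE) is the sum of the heat (q) and the work (w).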

In reactions involving gases, the work done is pressure-volume work, which is associated with a change in the reaction volume.

A bomb calorimeter is designed to operate at constant volume, so the reaction volume cannot change (ΔV = 0).

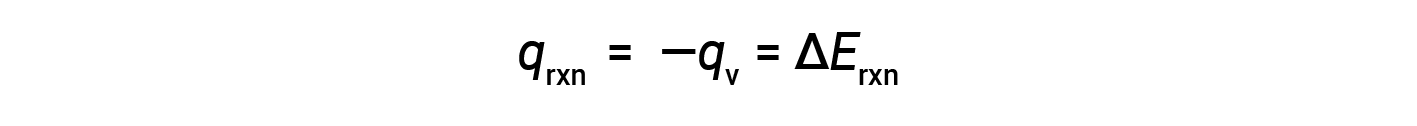

Therefore, the work done is zero, and the heat measured with a bomb calorimeter (q_v) equals the change in the internal energy of the reaction.

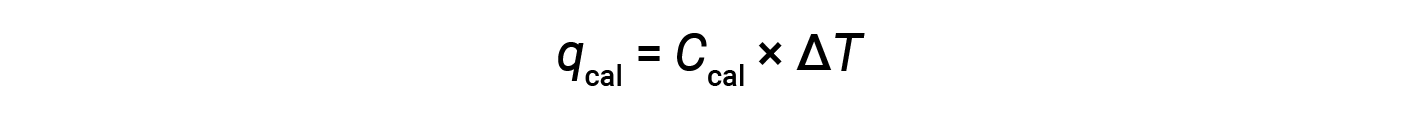

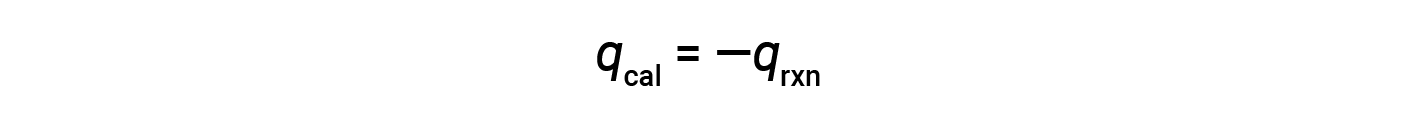

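Because the calorimeter is insulated, no heat is lost to the surroundings, so the heat gained by the calorimeter equals the heat released by the reaction.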

Because the volume is held constant, the heat evolved in the reaction corresponds to the change in internal energy.
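The chain of reasoning above can be summarized compactly. The lines below are a sketch under stated assumptions: the sign convention w = -PΔV (work done on the system counted as positive) and the symbols C_cal (heat capacity of the calorimeter) and ΔT (its measured temperature change) are introduced here for illustration.

$$
\begin{aligned}
\Delta E &= q + w \\
w &= -P\,\Delta V = 0 \quad (\Delta V = 0) \\
\Delta E &= q_v \\
q_v &= -q_{\mathrm{cal}} = -C_{\mathrm{cal}}\,\Delta T
\end{aligned}
$$

For an exothermic combustion the calorimeter warms (ΔT > 0), so q_v and ΔE are negative.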

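This is the internal energy change for the specific amount of reactant that undergoes combustion. Dividing this value by the number of moles that actually reacted gives ΔE_rxn per mole of that reactant.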
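The conversion from a measured temperature rise to a molar value can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the function names are hypothetical, and the heat capacity, temperature change, sample mass, and molar mass are made-up numbers chosen only to keep the arithmetic easy to follow.

```python
def reaction_heat_kj(heat_capacity_kj_per_c, delta_t_c):
    """Heat of reaction at constant volume: q_v = -C_cal * delta_T (in kJ).

    The minus sign reflects that heat gained by the calorimeter
    is heat lost by the reaction.
    """
    return -heat_capacity_kj_per_c * delta_t_c


def molar_energy_change_kj_per_mol(q_v_kj, mass_g, molar_mass_g_per_mol):
    """Divide q_v by the moles of reactant burned to get a per-mole value."""
    moles = mass_g / molar_mass_g_per_mol
    return q_v_kj / moles


# Hypothetical illustrative values (not measured data).
c_cal = 10.0       # calorimeter heat capacity, kJ/°C
delta_t = 2.0      # observed temperature rise, °C
sample_mass = 0.5  # mass of reactant burned, g
molar_mass = 100.0 # molar mass of the reactant, g/mol

q_v = reaction_heat_kj(c_cal, delta_t)
delta_e_rxn = molar_energy_change_kj_per_mol(q_v, sample_mass, molar_mass)

print(f"q_v = {q_v:.1f} kJ")
print(f"delta_E_rxn = {delta_e_rxn:.0f} kJ/mol")
```

With these assumed inputs, 20 kJ is released by 0.005 mol of reactant, which corresponds to roughly -4000 kJ/mol.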

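A bomb calorimeter must be calibrated to determine its heat capacity and to ensure accurate results. Calibration is carried out with a reaction whose q is known, such as the combustion of a measured quantity of benzoic acid ignited by a spark from a nickel fuse wire that is weighed before and after the reaction. The temperature change produced by the known reaction is used to determine the heat capacity of the calorimeter. Calibration is generally performed each time before the calorimeter is used to gather research data.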
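The calibration step can be sketched the same way. The snippet below is an illustration under stated assumptions: the function name and the optional `fuse_heat_kj` correction are hypothetical, the heat of combustion of benzoic acid is taken as approximately 26.4 kJ/g, and the sample mass and temperature rise are invented for the example.

```python
# Approximate heat released per gram of benzoic acid burned (assumed value).
BENZOIC_ACID_KJ_PER_G = 26.4


def calorimeter_heat_capacity(mass_standard_g, heat_per_gram_kj,
                              delta_t_c, fuse_heat_kj=0.0):
    """Estimate C_cal (kJ/°C) from a calibration run.

    C_cal = (heat released by the standard + heat from the fuse wire) / delta_T

    fuse_heat_kj is an optional, hypothetical correction for the portion
    of the fuse wire that burned; it defaults to zero (no correction).
    """
    if delta_t_c <= 0:
        raise ValueError("a calibration combustion should raise the temperature")
    heat_released_kj = mass_standard_g * heat_per_gram_kj + fuse_heat_kj
    return heat_released_kj / delta_t_c


# Hypothetical calibration run: 1.000 g of benzoic acid, 2.64 °C rise.
c_cal = calorimeter_heat_capacity(1.000, BENZOIC_ACID_KJ_PER_G, 2.64)
print(f"C_cal is about {c_cal:.2f} kJ/°C")
```

The resulting heat capacity is then multiplied by the temperature change observed for an unknown sample to obtain the heat of that reaction.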

This text is adapted from OpenStax, Chemistry 2e, Section 5.2: Calorimetry.

Tags

Constant Volume Calorimetry

Internal Energy Change

Heat Transfer

Work Measurement

Gaseous Chemical Reactions

Bomb Calorimeter

Coffee Cup Calorimeter

Naphthalene

Combustion Reaction

Surroundings

Temperature Change

Heat Capacity