Research and Development of High-performance Explosives

Summary

Developmental testing of high explosives for military applications involves small-scale formulation, safety testing, and finally detonation performance tests to verify theoretical calculations. This paper describes typical development tests for measuring detonation velocity and detonation pressure.

Abstract

Developmental testing of high explosives for military applications involves small-scale formulation, safety testing, and finally detonation performance tests to verify theoretical calculations. For newly developed formulations, the process begins with small-scale mixes, thermal testing, and impact and friction sensitivity testing. Only then do larger-scale formulations proceed to detonation testing, which is the subject of this paper. Recent advances in diagnostics have brought unparalleled precision to the characterization of the early-time evolution of detonations. The newer technique of Photonic Doppler Velocimetry (PDV) for measuring detonation pressure is presented and compared with traditional fiber-optic measurement of detonation velocity and plate-dent estimation of detonation pressure. In particular, the role of aluminum in explosive formulations is discussed. Recent work has produced explosive formulations in which the aluminum reacts very early in the expansion of the detonation products. This enhanced reaction changes the detonation velocity and pressure because the aluminum reacts with oxygen in the expanding gaseous products.

Introduction

Development of high explosives for military use involves extensive safety considerations and is constrained by the resources that test facilities require. At the US Army Armament Research, Development and Engineering Center (ARDEC), Picatinny Arsenal, explosives are evaluated from the research level through full lifecycle monitoring and demilitarization. New explosives that are safer to handle, store, and load are continuously evaluated to provide effective and safe munitions for the warfighter. Recent law dictates that Insensitive Munitions (IM) guidelines and requirements be followed whenever possible.1 Therefore, whenever new explosives are synthesized and formulated, performance testing is paramount to ensure they meet user requirements. In this context, the detonation properties of the newly developed PAX-30 are compared with those of PBXN-5, a traditional high-performance explosive. In particular, measurements of detonation velocity and detonation pressure, which are important for verifying theoretical models and performance calculations, are presented. PAX-30 was developed to replace legacy explosives such as PBXN-5 by incorporating reactive aluminum.

Aluminum has a high enthalpy of oxidation on a per-mole basis:

$$2\,\mathrm{Al} + \tfrac{3}{2}\,\mathrm{O_2} \rightarrow \mathrm{Al_2O_3} \qquad \Delta H \approx -1{,}670\ \mathrm{kJ/mol}$$
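As a quick worked conversion, taking the atomic mass of aluminum as 26.98 g/mol (a standard value, assumed here), the heat released per gram of aluminum is roughly:

$$\frac{1{,}670\ \mathrm{kJ\,mol^{-1}}}{2 \times 26.98\ \mathrm{g\,mol^{-1}}} \approx 31\ \mathrm{kJ\ per\ g\ of\ Al}$$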

By replacing some of the shock-sensitive explosive ingredients with aluminum, the formulation is rendered less sensitive to external shock and other hazard insults. This helps fulfill Insensitive Munitions (IM) and United Nations requirements while maintaining the performance necessary for military applications.2,3,4

The facilities to test such items are unique and highly specialized. Initial screening tests are performed before explosives are handled in large quantities. These tests include thermal characterization by differential scanning calorimetry (DSC) and impact and friction testing. In the DSC test, a small sample is heated at a constant rate in an inert atmosphere while the amount and direction of heat flow are monitored. In the impact test, the sample is subjected to a standardized falling weight (Bundesanstalt für Materialprüfung, or BAM Impact); in the friction test, it is subjected to a standardized ceramic pin and plate (BAM Friction).5

Once the formulations are deemed safe for handling, scale-up is accomplished with proprietary mixing technologies. Broadly, high explosives fall into three categories:

Melt-cast, in which the binder is a melt-phase material such as a wax, trinitrotoluene (TNT), dinitroanisole (DNAN), or another meltable material. Energetic or fuel solids can be incorporated with careful consideration of particle size and compatibility.

Cast-cure, in which the binder is a castable polymer, such as hydroxyl-terminated polybutadiene (HTPB), a polyacrylate, or another epoxy-type plastic that is liquid in its unreacted state but solidifies once curing is initiated. Solids are incorporated into the matrix while it is liquid.

Pressed, in which the solids loading is very high, often approaching 95% by weight, with a binder added to coat the solids by a lacquer or extrusion process.

Once pressed or cast, the materials are machined using standard methods to obtain the geometry required for a given test. The PAX-30 and PBXN-5 studied in this paper are high-performance pressed explosives. They are made through a slurry-coating process in which energetic nitramine crystals (HMX, RDX, or CL-20) and aluminum particles are suspended in an aqueous medium. A lacquer containing the proprietary binder is then added. The polymer coats the explosive crystals, the suspension is heated under vacuum to drive off the solvent, and the particles are then filtered and dried. The resulting granules are then pressed into the desired configuration.

Detonation Velocity

To determine the detonation velocity, one must monitor the arrival of the detonation front in the material. A detonation is a self-sustaining, essentially instantaneous rise in pressure and temperature that propagates faster than the sound speed in the material. It becomes self-sustaining once the temperature and pressure are high enough to drive exothermic reactions behind the propagating reaction front. Such behavior is achieved by incorporating oxidizing moieties, such as nitro (NO2) groups, into the molecules of the formulation. Two examples, RDX (cyclo-1,3,5-trimethylene-2,4,6-trinitramine) and HMX (cyclotetramethylenetetranitramine), are shown in Figure 1; they are by far the most widely used energetic materials in the US Department of Defense (DoD). Note the oxygen balance of the molecules, which sustains the self-propagating exothermic reaction behind the shock front.

Figure 1. RDX (cyclo-1,3,5-trimethylene-2,4,6-trinitramine, left) and HMX (cyclotetramethylenetetranitramine, right).
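The oxygen balance mentioned above can be computed with the standard textbook expression for a CaHbNcOd molecule converted to CO2, H2O, and N2: OB% = −1600 (2a + b/2 − d)/M, where M is the molar mass. The sketch below is illustrative only. The atomic masses are assumed standard values, and the function names are hypothetical and not taken from the original work.

```python
# Standard atomic masses in g/mol (assumed values, not from the original text).
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999}


def molar_mass(c: int, h: int, n: int, o: int) -> float:
    """Molar mass (g/mol) of a CcHhNnOo molecule."""
    return (c * ATOMIC_MASS["C"] + h * ATOMIC_MASS["H"]
            + n * ATOMIC_MASS["N"] + o * ATOMIC_MASS["O"])


def oxygen_balance_percent(c: int, h: int, n: int, o: int) -> float:
    """Oxygen balance (%) assuming complete conversion to CO2, H2O, and N2.

    A negative value means the molecule lacks some of the oxygen needed
    to fully oxidize its own carbon and hydrogen.
    """
    return -1600.0 * (2 * c + h / 2 - o) / molar_mass(c, h, n, o)


if __name__ == "__main__":
    # RDX is C3H6N6O6; HMX is C4H8N8O8.
    for name, formula in {"RDX": (3, 6, 6, 6), "HMX": (4, 8, 8, 8)}.items():
        print(f"{name}: OB = {oxygen_balance_percent(*formula):.1f} %")
```

Both molecules come out at about −21.6%. Each carries most, but not all, of the oxygen needed to burn its own carbon and hydrogen.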

One way to determine the speed of the detonation front is to monitor its position as a function of time. Fiber-optic detonation velocity (FODV) testing is performed for this purpose. An acrylic fixture was designed to hold the explosive sample and to locate the optical fibers at known distances along the charge. The standard test uses a 5-inch-long by 0.75-inch-diameter explosive sample with five optical fibers. The bottom fiber is located 0.50 inch from the bottom of the charge, and each successive fiber is located 1 inch above the previous one. The holes drilled in the acrylic fixture are two-stepped. The larger-diameter hole is sized to fit the core and cladding of the optical fiber, and the smaller-diameter hole serves as a confined air space. As the detonation progresses through the explosive sample, the shock wave compresses the air in this confined space, producing a short, bright flash that is carried by the optical fiber.

The optical fibers used for this test have an inexpensive plastic core. Because the test is destructive and the air shock is consistent, higher-quality fibers were not found to be necessary for high-quality velocity data. The test facility at Picatinny Arsenal uses summed photodiodes to convert the light from the detonation into a voltage. The amplitude of the voltage spike is unimportant for the purposes of this test. A 1-GHz oscilloscope is connected to the photodiode summing box, although that bandwidth is far beyond what this test requires. The arrival time at each fiber can be taken either from the first rise of its signal or from its peak value. The detonation velocity is then determined from the distance between the optical fibers and the differences in detonation arrival times.
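Figure 6 reports a linear fit of position versus time. A minimal least-squares sketch of that kind of fit follows. It assumes the 1-inch (25.4 mm) fiber spacing described above and arrival times already read from the oscilloscope. The function name and the example times are hypothetical placeholders, not measured data.

```python
MM_PER_INCH = 25.4


def fit_detonation_velocity(positions_mm, times_us):
    """Ordinary least-squares fit of position = D * time + x0.

    Returns (D in mm/us, intercept x0 in mm, coefficient of determination R^2).
    """
    n = len(times_us)
    if n != len(positions_mm) or n < 3:
        raise ValueError("need at least three matched (time, position) pairs")
    t_mean = sum(times_us) / n
    x_mean = sum(positions_mm) / n
    s_tt = sum((t - t_mean) ** 2 for t in times_us)
    s_tx = sum((t - t_mean) * (x - x_mean) for t, x in zip(times_us, positions_mm))
    slope = s_tx / s_tt
    intercept = x_mean - slope * t_mean
    ss_res = sum((x - (slope * t + intercept)) ** 2
                 for t, x in zip(times_us, positions_mm))
    ss_tot = sum((x - x_mean) ** 2 for x in positions_mm)
    return slope, intercept, 1.0 - ss_res / ss_tot


if __name__ == "__main__":
    # Distance travelled past each fiber, measured from the first (top) fiber.
    positions = [i * MM_PER_INCH for i in range(5)]
    # Hypothetical arrival times in microseconds (placeholders only).
    times = [0.00, 2.99, 5.98, 8.96, 11.95]
    d, x0, r2 = fit_detonation_velocity(positions, times)
    print(f"D = {d:.2f} mm/us, R^2 = {r2:.6f}")
```

The slope of the fit is the average detonation velocity over the instrumented length. The pairwise approach in the protocol instead yields one velocity per fiber interval, and the scatter of those values gives the reported standard deviation.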

Detonation Pressure

Detonation pressure is estimated by measuring the depth of the dent that the explosive's detonation produces in a standard steel plate. Dent depths correlate well with known pressure values for a variety of explosive compounds. The detonation of most explosives is well described by the Chapman-Jouguet (CJ) condition, so the detonation pressure is commonly called the CJ pressure, and that term is used from this point forward. The charge assembly is placed on top of a steel plate, called a “witness plate”, and the detonation produces a dent in the plate. The test dent depth is then compared with the dent depths produced at the standard 0.75-inch charge diameter by numerous explosives of known detonation pressure. The plate-dent method is reliable and is backed by many years of documented data for acceptable correlations. However, a detonation is a dynamic, fast chemical reaction, and in recent years it has become desirable to use higher-resolution tools to observe the pressure-time history.
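The comparison against reference explosives amounts to reading the test dent depth off a calibration curve. The sketch below assumes piecewise-linear interpolation between calibration points. The calibration values used in the example are hypothetical placeholders, not ARDEC data, and a real calibration may instead use a fitted curve.

```python
from bisect import bisect_left


def pressure_from_dent(depth_mm, calibration):
    """Interpolate CJ pressure (GPa) from a dent depth (mm).

    calibration: iterable of (dent_depth_mm, cj_pressure_gpa) pairs measured
    with reference explosives at the same charge diameter and plate type.
    """
    points = sorted(calibration)
    depths = [d for d, _ in points]
    if not depths[0] <= depth_mm <= depths[-1]:
        raise ValueError("dent depth outside calibration range; do not extrapolate")
    i = bisect_left(depths, depth_mm)
    if depths[i] == depth_mm:
        return points[i][1]
    (d0, p0), (d1, p1) = points[i - 1], points[i]
    return p0 + (p1 - p0) * (depth_mm - d0) / (d1 - d0)


if __name__ == "__main__":
    # Hypothetical calibration pairs, for illustration only.
    cal = [(5.0, 20.0), (7.0, 30.0), (9.0, 40.0)]
    print(f"{pressure_from_dent(8.0, cal):.1f} GPa")
```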

Photonic Doppler Velocimetry (PDV) can also be used to measure the detonation pressure of an explosive more directly. This laser interferometer system was developed at Lawrence Livermore National Laboratory and uses a 1,550 nm continuous-wave (CW) laser source.8 The laser is directed at a moving target and the Doppler-shifted light is collected, and the resulting beat frequency can be analyzed to provide a velocity trace of the target. Unlike traditional high-speed photographic techniques, these velocity traces provide a continuous record of the target's velocity as a function of time. This measurement technique has gained significant attention in recent years and is becoming ubiquitous in DoD and Department of Energy (DoE) explosive characterization labs.
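For a target moving along the beam axis, the standard heterodyne relation links the beat frequency f_b to the velocity v through the laser wavelength λ0. It gives a convenient rule of thumb for the 1,550 nm source. This sketch ignores the additional window correction needed when viewing through PMMA:

$$v = \frac{\lambda_0 f_b}{2}, \qquad \frac{1{,}550\ \mathrm{nm}}{2} \times 1\ \mathrm{GHz} = 775\ \mathrm{m/s}$$

An interface moving at 2 mm/µs (2,000 m/s) therefore produces a beat frequency of about 2,000/775 ≈ 2.58 GHz.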

To calculate the CJ pressure of a new explosive, a PDV system can be used to measure the particle velocity at the interface between the explosive and a polymethyl methacrylate (PMMA) window. A very thin foil, usually aluminum or copper, is placed at this interface to act as a reflective surface. This foil should be thin enough to avoid significant shock-wave attenuation yet thick enough to block the detonation light. Typically, a foil thickness of 1,000 angstroms is ideal for most experimental setups. Given the particle velocity in the PMMA and the detonation velocity of the explosive, the detonation pressure can be calculated by Hugoniot shock (impedance) matching.6
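To illustrate the shock-matching step, the sketch below uses a simple acoustic (mirror-image) approximation. It treats the product expansion from the CJ state as a straight line of slope −ρ0D in the pressure-particle velocity plane, and it is not necessarily the exact calculation used by the authors. The PMMA Hugoniot coefficients (ρ = 1.186 g/cm³, Us = 2.598 + 1.516 up km/s) are assumed literature-style values that should be replaced with your own data. The explosive density and velocity in the example are placeholders. With densities in g/cm³ and velocities in km/s (mm/µs), pressures come out directly in GPa.

```python
# Assumed PMMA Hugoniot (linear Us-up fit); replace with your own calibration.
PMMA_RHO0 = 1.186  # g/cm^3
PMMA_C0 = 2.598    # km/s
PMMA_S = 1.516     # dimensionless slope


def pmma_shock_state(up):
    """Return (shock velocity in km/s, pressure in GPa) in PMMA for particle velocity up (km/s)."""
    us = PMMA_C0 + PMMA_S * up
    return us, PMMA_RHO0 * us * up


def cj_pressure_acoustic(up_window, rho_explosive, det_velocity):
    """Estimate CJ pressure (GPa) from the measured window particle velocity.

    Mirror-image approximation: the products expand along P = 2*P_CJ - Z_e*u,
    with Z_e = rho0*D. This line meets the window Hugoniot P = Z_w*u
    (Z_w = rho_w*Us) at u = up_window, so P_CJ = up_window * (Z_w + Z_e) / 2.
    """
    us, _ = pmma_shock_state(up_window)
    z_window = PMMA_RHO0 * us
    z_explosive = rho_explosive * det_velocity
    return up_window * (z_window + z_explosive) / 2.0


if __name__ == "__main__":
    up = 2.0  # km/s, hypothetical window particle velocity
    us, p_window = pmma_shock_state(up)
    p_cj = cj_pressure_acoustic(up, rho_explosive=1.9, det_velocity=8.8)
    print(f"PMMA: Us = {us:.3f} km/s, P = {p_window:.2f} GPa; estimated P_CJ = {p_cj:.1f} GPa")
```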

While the FODV test at 0.75” charge diameter is an established standard at ARDEC, PDV-based tests are continually undergoing refinement. Depending on the explosive formulation, either one or both tests can be used to characterize detonation velocity and detonation pressure.

Protocol

CAUTION! The processing, handling, and testing of high explosives (Hazard Division Class 1 materials) should only be carried out by trained and qualified personnel. High explosives are sensitive to impact, friction, electrostatic discharge, and shock. Only use approved research and development facilities that can handle large quantities of Class 1 materials.

1. ARDEC Fiber-optic Detonation Velocity Test

- Cut the optical fiber to length using fiber-optic cutters and bundle it in sets of five cables. Based on site-specific test chamber geometries, 15-m lengths are typically used. Strip the cable jacket back 15 mm on one end of the bundle and 5 mm on the other end. Polish the cut ends of the optical fibers with P800-grit sandpaper to remove any burrs.

Note: Because this test is destructive, plastic optical fiber is preferred. The optical fiber properties are as follows: polymethyl methacrylate (PMMA) resin core (980 µm diameter), fluorinated polymer cladding (1,000 µm diameter), core refractive index of 1.49, and numerical aperture of 0.5.

- Measure the diameters, lengths, and masses of the test sample and Composition A-3 Type II booster pellets using a high-accuracy caliper and balance.

Note: While the typical test uses 1.905 cm diameter by 2.54 cm long pellets, the test procedure can be used with any pellet size, provided the plastic fixture holds each fiber-optic cable centered on a pellet. The 1.905 cm diameter pellets were used for the tests in this study.

- Load the explosive pellets one by one into the plastic fixture, expanding the tube's inner diameter by prying the slot open. Record the explosive pellet numbers and their locations in the fixture. Then load the booster pellet into the tube from the top of the fixture.

- Place acrylic detonator holder on top of the booster pellet.

Note: RP-502 Exploding Bridgewire Detonators (EBWs) are typically used. Other detonators could be substituted, although the test would then need to be recalibrated.

- Insert the shorter exposed ends (5 mm) of the optical fibers into the two-stepped holes in the detonation velocity test fixture.

Note: The two-stepped holes ensure there is sufficient air for ionization upon passage of the detonation front, which produces a strong signal. The fixture holes should consist of a 0.021-inch diameter by 0.020-inch deep inner hole against the explosive and a 0.042-inch diameter hole for insertion of the optical fiber. If plastic fibers are used, light sanding of the fiber's outer diameter may be necessary, depending on both the fiber diameter and the test fixture tolerances. Ensure that each optical fiber is fully inserted (seated on the step of the two-stepped hole).

- Glue the fibers in place with 5-minute epoxy.

- When the epoxy holding the fibers has fully cured, position the acrylic tube containing the explosive pellets on top of the steel witness plate. Secure the test fixture to the steel plate with either a weight placed on top of it or tape. Ensure that there is no air gap between the bottom surface of the last explosive pellet and the steel witness plate.

- Epoxy 360° around the test fixture, adhering it to the witness plate. After the epoxy has fully cured, place the detonator in the detonator holder that is at the top of the test fixture and secure it in place with tape.

- Transport the test fixture to the test chamber and insert the longer exposed ends (15 mm) of the optical fibers into the photodiode summing box. Connect the photodiode summing box, or another data acquisition system as appropriate, to an oscilloscope (1 GHz bandwidth is more than sufficient).

- Connect a firing line to the RP-80 detonator. Close all required doors and ports, and conduct area lockdown operations per the facility's explosive test firing standard operating procedures (SOPs).

- Confirm the oscilloscope trigger, voltage/division, and time/division settings. Connect the trigger output of the high-voltage fireset to one oscilloscope channel, with a trigger threshold of 3.0 V. Connect the photodiode summing box to a second channel. Set both channels to 5 V/division and the timebase to 5 µs/division, with a delay setting of -20 µs.

- Detonate the item via high-energy fireset.

- Determine the arrival time at each fiber from the output of the photodiode summing box. On the oscilloscope trace, use the peak voltages to determine the arrival times, although the first rise of each signal may be a better indicator depending on the equipment used.

- Calculate the detonation velocity from the five arrival times acquired from the oscilloscope. Because the spacing of the optical fibers is known, calculate the detonation velocity for each interval by dividing the distance between adjacent fibers by the time between their peaks (distance/time = velocity). Report both the average and the standard deviation.

- Measure the depth of the dent in the steel witness plate. Place a calibrated steel ball bearing in the dent to locate its lowest point, and then use a depth gauge to determine the depth.
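The data-reduction steps above can be sketched as follows. First, arrival times are extracted from the summed photodiode trace. Then the interval velocities are computed, along with their mean and standard deviation. This is a minimal illustration that assumes a digitized trace with uniform sample spacing and a first-rise (threshold-crossing) criterion. The threshold, dead time, synthetic trace, and function names are hypothetical choices, and a peak-based criterion could be substituted.

```python
import statistics


def first_rise_times(trace_v, dt_us, threshold_v, dead_time_us):
    """Times (us) at which the trace first rises through threshold_v.

    Crossings closer than dead_time_us to the previously accepted crossing
    are ignored so that ringing on a single flash is not counted twice.
    """
    times = []
    for i in range(1, len(trace_v)):
        if trace_v[i - 1] < threshold_v <= trace_v[i]:
            t = i * dt_us
            if not times or t - times[-1] >= dead_time_us:
                times.append(t)
    return times


def interval_velocities(spacing_mm, times_us):
    """Detonation velocity (mm/us) for each pair of adjacent fibers."""
    return [spacing_mm / (t1 - t0) for t0, t1 in zip(times_us, times_us[1:])]


if __name__ == "__main__":
    # Synthetic trace: five 50-ns flashes, 3 us apart, sampled every 10 ns.
    dt = 0.01
    trace = [0.0] * 1500
    for start in (100, 400, 700, 1000, 1300):
        for j in range(5):
            trace[start + j] = 4.0
    arrivals = first_rise_times(trace, dt, threshold_v=1.0, dead_time_us=0.5)
    speeds = interval_velocities(25.4, arrivals)  # 1-inch fiber spacing
    print(f"D = {statistics.mean(speeds):.3f} +/- {statistics.stdev(speeds):.3f} mm/us")
```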

2. Photonic Doppler Velocimetry

- Machine a PMMA window approximately 6.5 mm thick with the same diameter as the explosive charge. Ensure that the window is optically clear and free of machining defects. To do so, cut the disks from an optically clear sheet of cast acrylic using a laser cutter or a similar machining process, and then polish the PMMA to obtain an optically clear surface.

- Ensure that the aluminum foil thickness does not exceed 0.005” per the manufacturer's specifications. If the foil surface is pristine (specular), roll a sanded stainless steel ball bearing over it. A diffuse surface gives optimal laser back-reflection, even when the alignment is slightly off.

- Use a thin, optically clear, acrylic-based adhesive tape to affix the aluminum foil to the PMMA window. Ensure that there are no air bubbles between the PMMA and the aluminum.

- Measure the diameters, lengths, and masses of the explosive test sample pellets using a high-accuracy caliper and balance.

- Affix the explosive test sample pellets to each other to form a continuous charge, including any boosters (if necessary). Apply grease at each explosive interface during assembly to minimize air gaps at pellet interfaces.

- Mount detonation velocity pins into an acrylic fixture. These may be either optical fibers or piezoelectric pins. The locations of the pins with respect to the charge must be known.

- Affix the acrylic detonation velocity pin holder to the charge. Tape is sufficient to hold the acrylic pin holder to the charge. Typically, the fiber/pin locations are nearest the bottom of the explosive charge such that steady state detonation may be observed.

- Attach the detonator to the charge. Invert the assembled charge and stabilize it in this orientation to prepare for affixing the PMMA window. Place a small amount of grease on the explosive face to prevent air bubbles at the aluminum/explosive interface.

- Affix the foil side of the PMMA window to the explosive charge. If the window and charge are concentric, use tape circumferentially. If not, tape down the axis of the explosive charge.

- Once the PMMA window is securely attached to the explosive charge, affix the acrylic PDV probe holder to the PMMA window with tape. Insert the PDV probe into the PDV probe holder.

- Align the PDV probe in the holder with a back-reflection meter. This device outputs a low-power laser beam and measures the back-reflection amplitude. A back-reflection of -10 dBm to -20 dBm is desirable. Once the back-reflection is optimal, epoxy the PDV probe in place.

- Place the test item in the chamber and connect both the detonation velocity leads (fiber-optic or piezoelectric) and the PDV fiber. Connect a firing line to an RP-80 detonator. Close all required doors and ports, and conduct area lockdown operations per the facility's explosive test firing SOPs.

- Confirm the oscilloscope trigger, voltage/division, and time/division settings. Confirm the PDV system settings. Check the signal and reference laser amplitudes and adjust them as necessary.

- Detonate the item via high energy fireset. Save oscilloscope traces for both PDV data and detonation velocity data.

- Analyze the PDV data in an appropriate data analysis program. The raw PDV signal must be processed with a fast Fourier transform (FFT)-based analysis package.

Note: From the frequency content of the raw signal and the known wavelength of the light source (1,550 nm), the FFT analysis package produces a velocity spectrogram that plots the recorded velocity as a function of time. In this case, PlotData, a proprietary United States Department of Energy graphical user interface (GUI), is used in conjunction with LabVIEW software to carry out the FFT. However, many commercially available analysis packages can perform these tasks.

- Calculate the detonation velocity from the five arrival times acquired from the oscilloscope. Because the spacing of the pins is known, the detonation velocity for each interval is calculated by dividing the distance between adjacent pins by the time between their peaks (distance/time = velocity). The average and standard deviation are reported.
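The FFT step described in the note above can be illustrated with a short-time Fourier transform written in plain Python. This is a hypothetical, minimal sketch, not the PlotData workflow. It slides a Hann-windowed segment along a uniformly sampled beat signal and takes the strongest non-DC frequency bin in each segment. It then converts that frequency to velocity with v = λ0 f_b/2 for the 1,550 nm source. Real analysis packages add zero-padding, peak interpolation, and baseline handling. Measurements through a PMMA window also need a window (refractive-index) correction. None of these are included here, and the digitizer rate and synthetic signal are assumptions.

```python
import cmath
import math

WAVELENGTH_M = 1550e-9  # PDV laser wavelength given in the text


def beat_to_velocity(f_beat_hz):
    """Velocity (m/s) from beat frequency (Hz): v = lambda0 * f_b / 2."""
    return 0.5 * WAVELENGTH_M * f_beat_hz


def dft_magnitudes(segment):
    """DFT magnitudes for bins 0..N/2, computed by direct O(N^2) summation."""
    n = len(segment)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(segment))
        mags.append(abs(acc))
    return mags


def velocity_history(signal, fs_hz, window=128, step=64):
    """Return (time in s, velocity in m/s) pairs along the spectrogram ridge."""
    hann = [0.5 - 0.5 * math.cos(2 * math.pi * i / window) for i in range(window)]
    history = []
    for start in range(0, len(signal) - window + 1, step):
        segment = [signal[start + i] * hann[i] for i in range(window)]
        mags = dft_magnitudes(segment)
        k_peak = max(range(1, len(mags)), key=mags.__getitem__)  # skip the DC bin
        f_peak = k_peak * fs_hz / window
        t_center = (start + window / 2) / fs_hz
        history.append((t_center, beat_to_velocity(f_peak)))
    return history


if __name__ == "__main__":
    fs = 20e9       # hypothetical digitizer rate, 20 GS/s
    f_beat = 2.5e9  # synthetic beat frequency
    signal = [math.cos(2 * math.pi * f_beat * i / fs) for i in range(512)]
    for t, v in velocity_history(signal, fs):
        print(f"t = {t * 1e9:6.2f} ns, v = {v:7.1f} m/s")
```

The frequency resolution of each segment is fs/window (156.25 MHz here, or about 121 m/s). This trade-off between time and velocity resolution is why production tools interpolate the spectral peak.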

Representative Results

A typical PDV setup is shown in Figures 2 and 3, and the FODV setup is shown in Figure 4. The dent plates from traditional FODV shots are shown in Figure 5, and the position-time results for PAX-30 and PBXN-5 are shown in Figure 6. Both materials have similar detonation velocities (the slope of the line), with PAX-30 about 0.4 mm/µs slower. Although this difference may not seem large, it is significant given that PAX-30 contains nearly 20% less explosive fill by weight. Detonation velocity is not a conclusive test for quantifying aluminum reaction in or immediately behind the detonation front, but it can provide a preliminary assessment of aluminum reaction.

Figure 2. A typical PDV setup. The explosive pellets or cast sticks are stacked.

Figure 3. PDV setup (close view). The PDV setup at the base.

Figure 4. FODV setup. The stick is epoxied to the steel witness plate to ensure solid contact and an upright stance during setup. The detonator and booster are at the top of the stick.

Figure 5. Dent from FODV test. The dent is measured with a calibrated depth gauge or a profilometer.

Figure 6. Detonation rate calculations. Each data point is from the fiber-optic pins in the FODV setup. PAX-30: R² = 0.999717, RMSE (root mean square error) = 0.519693; PBXN-5: R² = 0.998778, RMSE = 1.342272.

| Explosive | n | Detonation Velocity (mm/µs) | CJ Pressure, Plate Dent (GPa) | CJ Pressure, PDV (GPa) |
|---|---|---|---|---|
| PBXN-5 | 3 | 8.83 ± 0.12 | 37.9 ± 1.4 | 34.7 ± 0.0 |
| PAX-30 | 3 | 8.48 ± 0.04 | 32.3 ± 1.3 | 30.5 ± 0.3 |

Table 1. Performance data from experiments. n is the total number of tests, each with five fiber-optic pins. The PDV CJ pressure is from a single test.

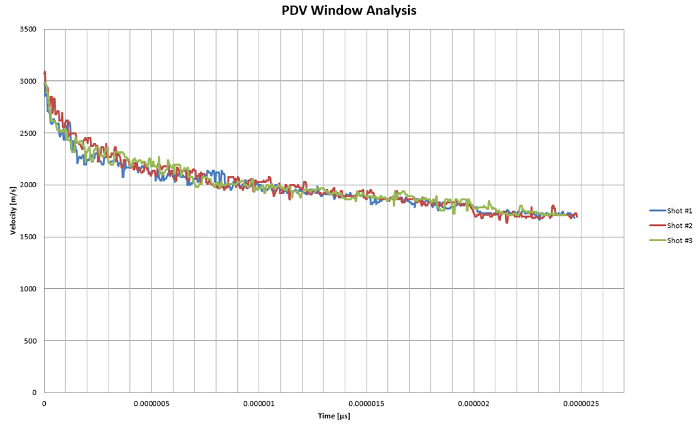

The PDV trace recorded at the bottom of the explosive charge in Figures 2-3 is shown in Figure 7. The CJ pressure is calculated by modeling the product-gas Hugoniot with Cooper's approximation,6 and then extrapolating to the CJ point once the PMMA and explosive Hugoniots are matched. A typical screen capture from such a calculation is shown in Figure 8. The technique still has limitations, because the calculation assumes a linear extrapolation from the beginning of the window velocity trace. This slightly underestimates the pressure, as evidenced by the results in Table 1.
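The size of that underestimate can be read directly from Table 1. The short calculation below uses only the tabulated mean values and ignores their uncertainties. It expresses each PDV CJ pressure relative to the corresponding plate-dent value. The variable and function names are illustrative.

```python
# Mean CJ pressures from Table 1 (GPa).
TABLE_1 = {
    "PBXN-5": {"plate_dent": 37.9, "pdv": 34.7},
    "PAX-30": {"plate_dent": 32.3, "pdv": 30.5},
}


def percent_difference(value, reference):
    """Signed difference of value relative to reference, in percent."""
    return 100.0 * (value - reference) / reference


if __name__ == "__main__":
    for name, p in TABLE_1.items():
        diff = percent_difference(p["pdv"], p["plate_dent"])
        print(f"{name}: PDV is {diff:+.1f}% relative to plate dent")
```

For these means, the PDV values fall roughly 8% (PBXN-5) and 6% (PAX-30) below the plate-dent values.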

Figure 7. Window velocity as a function of time for the measurement of CJ pressure. Note the excellent agreement between the different shots, where the traces practically fall on one another.

Figure 8. Calculation of the CJ pressure from the PDV experiment. Note that the extrapolation assumes a linear acceleration in the initial push of the window, which currently leads to an underestimation of the CJ pressure.

Figure 9. Depiction of the expansion isentropes for reacted and unreacted aluminum in the detonation products. The blue straight lines are the tangent (Rayleigh line) solutions, whose slopes are proportional to the square of the detonation velocity. Note that the reacted-Al product solution forces the detonation velocity to be lower than the unreacted-Al solution.

Discussion

Note the calculated pressure differences between the two explosive formulations. The aluminized explosive exhibits less pressure, partially due to less nitramine (HMX) loading, but also because the aluminum reacts with oxygen in the expanding detonation gases, which results in a smaller dent from a lower detonation pressure. The PBXN-5 exerts a higher detonation pressure due to its higher gas content upon detonation compared to PAX-30 (36.2 moles/kg for PBXN-5 versus 33.1 moles/kg for PAX-30). More advanced equations of state (EOS) derived from wall velocity measurements are used to describe the conditions of the explosive products at such extreme temperatures and pressures.10,11 This will be the subject of future manuscripts.

It was apparent that when early reaction of a metal in an explosive occurs, the measured detonation velocity is lower than if the metal does not react. This is somewhat counterintuitive; one would expect the velocity to increase if the exothermic reaction of aluminum deposited more energy into the expanding detonation front. The decrease in detonation velocity arises from the solutions to the pressure-density Hugoniots. The pressure-specific volume (inverse density) isentrope describes how the state of the products changes as they expand (from left to right in Figure 9).6 The expansion isentrope represents the detonation products that can thermodynamically form and expand along the pressure-specific volume curve. If the aluminum reacts to form oxidized species during expansion, the overall gas density decreases, leading to a lower velocity. This is manifested as an expansion isentrope lying below the solution for non-reactive aluminum (Figure 9). The detonation velocity is set by the slope of the line drawn from the initial specific volume on the x-axis tangent to the isentrope. It follows that the detonation velocity must decrease when the aluminum in the formulation reacts.
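The tangency argument can be made concrete with the Rayleigh line, P − P0 = ρ0²D²(v0 − v). This line passes through the initial state (v0 = 1/ρ0, P0 ≈ 0) with slope −ρ0²D². The sketch below assumes a hypothetical polytropic isentrope P = A v^(−γ), purely for illustration. It is not the equation of state used for Figure 9. Pressures are in GPa and specific volumes in cm³/g, so D comes out in km/s. The code searches for the Rayleigh line tangent to the curve, which is the line with the minimum D that still touches it, and compares an isentrope with its copy lowered by 10%.

```python
import math


def cj_tangent(v0, isentrope, n_points=10000):
    """Find the Rayleigh line from (v0, 0) that is tangent to a P(v) curve.

    The line through (v0, 0) and (v, P(v)) has slope -P/(v0 - v) = -(D/v0)**2,
    so D = v0 * sqrt(P(v) / (v0 - v)). The tangent (CJ) line is the one with
    the smallest D among all lines that touch the curve.
    Returns (D_cj, v_cj, P_cj).
    """
    best = None
    for i in range(1, n_points):
        v = v0 * i / n_points
        p = isentrope(v)
        d = v0 * math.sqrt(p / (v0 - v))
        if best is None or d < best[0]:
            best = (d, v, p)
    return best


def polytropic(a, gamma):
    """Hypothetical isentrope P = a * v**(-gamma), with P in GPa and v in cm^3/g."""
    return lambda v: a * v ** (-gamma)


if __name__ == "__main__":
    rho0 = 1.8                        # g/cm^3, placeholder initial density
    v0 = 1.0 / rho0
    unreacted = polytropic(2.0, 3.0)  # placeholder coefficients
    reacted = polytropic(1.8, 3.0)    # same shape, 10% lower pressure
    for label, curve in (("unreacted Al", unreacted), ("reacted Al", reacted)):
        d, v, p = cj_tangent(v0, curve)
        print(f"{label}: D = {d:.2f} km/s at v = {v:.4f} cm^3/g, P = {p:.1f} GPa")
```

Because the whole curve is scaled down uniformly, D falls by the square root of the pressure ratio, about 5% here. The size of the real effect depends on the actual product equation of state.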

In summary, the United States Department of Defense continues to actively pursue applied research on, and characterization of, new energetic materials with both traditional and novel technologies. PDV in particular is a valuable tool that characterizes explosives with high precision and gives researchers valuable insight into explosive effectiveness. Its rapid test cycle greatly decreases the cost and time needed for formulation optimization and requirements verification.

Disclosures

The authors have nothing to disclose.

Acknowledgements

The authors would like to thank the Future Requirement of Enhanced Energetics for Decisive Munitions (FREEDM) Program for funding, Mike Van De Waal and Gerard Gillen for their assistance in testing, Paula Cook for formulations assistance, and Ralph Acevedo and Brian Travers for pressing of the samples.

Materials

| Material | Supplier | Grade/Product | Comments |
|---|---|---|---|
| Cyclotetramethylenetetranitramine (HMX) | BAE | Class 5 | 1.1D, High Explosive |
| Aluminum | Valimet | Proprietary | |
| Viton | 3M | | |
| Grease | Dow Corning | Sylgard 182 | Gap sealer |

References

- Title 10, Chapter 141, Section 2389. United States Code. (2001).

- Anderson, P. E., Cook, P., Davis, A., Mychajlonka, K. The Effect of Binder Systems on Early Aluminum Reaction in Detonations. Propellants, Explosives, and Pyrotechnics. 38 (4), 486-494 (2013).

- Trzcinski, W. A., Cudzilo, S., Paszula, J. Studies of Free Field and Confined Explosions of Aluminum Enriched RDX Compositions. Propellants, Explosives, Pyrotechnics. 32 (6), 502-508 (2007).

- Volk, F., Schedlbauer, F. Products of Al Containing Explosives Detonated in Argon and Underwater. (1995).

- United Nations. Recommendations on the Transport of Dangerous Goods: Tests and Criteria, revisions adopted by reference (A.1), ST/SG/AC.10/11. (2013).

- Cooper, P. W. Explosives Engineering. (1996).

- Chapman, D. L. On the rate of explosion in gases. Philosophical Magazine Series 5. 47 (284), 90-104 (1899).

- Strand, O. T., Goosman, D. R., Martinez, C., Whitworth, T. L., Kuhlow, W. W. Compact system for high-speed velocimetry using heterodyne techniques. Review of Scientific Instruments. 77 (8), (2006).

- Manner, V. W., Pemberton, S. J. The role of Aluminum in the Detonation and Post-detonation expansion of Selected Cast HMX-Based Explosives. Propellants, Explosives, and Pyrotechnics. 37 (2), 198-206 (2012).

- Baker, E. L., Stiel, L., Balas, W., Capellos, C., Pincay, J. Combined Effects Aluminized Explosives. (2008).

- Stiel, L. I., Baker, E. L., Capellos, C., Furnish, M. D., Elert, M., Russell, T. P., White, C. T. Study of Detonation and Cylinder Velocities for Aluminized Explosives. Shock Compression of Condensed Matter – 2005. AIP Conference Proceedings. 845, 475-478 (2006).